Enhancing UX Evaluation Through Collaboration with Conversational AI Assistants: Effects of Proactive Dialogue and Timing

Abstract

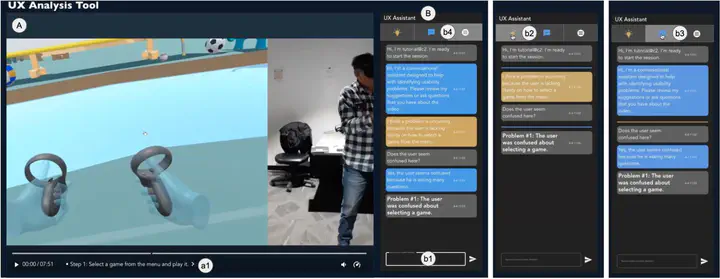

Usability testing is vital for enhancing the user experience (UX) of interactive systems. However, analyzing test videos is complex and resource-intensive. Recent AI advancements have spurred exploration into human-AI collaboration for UX analysis, particularly through natural language. Unlike user-initiated dialogue, our study investigates the potential of proactive conversational assistants to aid UX evaluators through automatic suggestions at three distinct times, before, in sync with, and after potential usability problems. We conducted a hybrid Wizard-of-Oz study involving 24 UX evaluators, using ChatGPT to generate automatic problem suggestions and a human actor to respond to impromptu questions. While timing did not significantly impact analytic performance, suggestions appearing after potential problems were preferred, enhancing trust and efficiency. Participants found the automatic suggestions useful, but they collectively identified more than twice as many problems, underscoring the irreplaceable role of human expertise. Our findings also offer insights into future human-AI collaborative tools for UX evaluation.